Random thoughts while gazing at the misty AI Frontier

A bunch of random things I have been thinking about, some of which are probably wrong

I was originally going to write a long articulate post for each of the below, with lots of fancy graphics, charts, and detailed analysis. Then I realized it is too much work. Instead, here is some human idea slop & random thoughts. Enjoy!

OAI and Anthropic are now at 0.1% of US GDP each. What % of GDP is AI revenue in 2030?

US GDP is roughly $30T. OpenAI and Anthropic are both rumored to be currently in the ballpark of $30B of revenue run rate, or about 0.1% of overall GDP each. Throw in clouds and other services, and AI has grown from roughly zero to 0.25%-0.5% of US GDP in just a few years. If Anthropic and OpenAI hit $100B of revenue by EOY as many think they might, roughly 1% of GDP run rate will be from AI by end of 2026. This is insanely fast.
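For anyone who wants to poke at the arithmetic, here is a minimal sketch of the share-of-GDP math using only the rough figures above. The function name and the "two labs at $100B each" scenario are my own illustrative assumptions (the $100B figure could also be read as a combined number), and none of this is a forecast.

```python
# Rough figures taken from the text; everything here is back-of-envelope.
US_GDP_USD = 30e12          # roughly $30T
LAB_RUN_RATE_USD = 30e9     # rumored ~$30B run rate per lab


def share_of_gdp_pct(revenue_usd, gdp_usd=US_GDP_USD):
    """Return annualized revenue as a percentage of GDP."""
    return 100.0 * revenue_usd / gdp_usd


# One lab today, and both labs combined today.
per_lab_today = share_of_gdp_pct(LAB_RUN_RATE_USD)
two_labs_today = share_of_gdp_pct(2 * LAB_RUN_RATE_USD)

# Assumed scenario: each lab reaches $100B of run rate.
two_labs_at_100b = share_of_gdp_pct(2 * 100e9)

for label, pct in [
    ("one lab today", per_lab_today),
    ("two labs today", two_labs_today),
    ("two labs at $100B each (assumed)", two_labs_at_100b),
]:
    print(f"{label}: {pct:.2f}% of GDP")
```

The gap between the two-lab numbers and the broader ranges quoted above is whatever clouds and other AI services add on top, which this sketch deliberately leaves out.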

What % of GDP will AI be in 2030? 2035? How much does the sheer size of the US economic base slow the growth of AI's share? How much of the productivity gain ends up missing from GDP, a la the missing productivity impact of the internet in the 2000s or IT in the 1980s and 1990s?

(Aside - If the impact of AI is mismeasured, perhaps the wrong regulatory policies get implemented as a reaction as well - as AI gets blamed for only the bad (job losses) and not the good (new types of jobs, impact on education, healthcare…). Maybe the real ASI/Turing test is the ability to measure real world US GDP and productivity gains? :)

The AI research community just had a distributed IPO

When a company goes public many of the early employees may find themselves suddenly enriched. This may change behavior - people get distracted buying homes, chasing status or spouses, partying, or doing societal side quests. This does not apply to everyone, but a subset of people experience this.

Meta aggressively paying for talent changed the AI research talent market, as the main labs had to match or provide large compensation increases to their researchers. Arguably, the AI research community just underwent the cross-company equivalent of an IPO, spanning a cross section of the big labs & big tech. Somewhere between 50 and a few hundred people across all AI labs were granted huge sums of money as a reaction to Meta bidding on the best regarded researchers and driving up everyone's salaries.

Just like a traditional IPO, a subset of the members of that community are shifting some aspects of focus and lifestyle, checking out or getting distracted, while others stay the course. In general the AI community is very mission aligned around building AGI or focusing on AI for science.

Either way, an interesting new phenomenon has quietly occurred in Silicon Valley where, instead of a company going public, a very specific slice of people effectively did. The top AI researchers became post-economic all at once. (Maybe the closest prior analogue is the early crypto HODLers?)

Compute ceiling = artificial asymptote on near term model capabilities? Does this just reinforce an oligopoly market for now?

We have seen amazing progress in model capabilities in the last few years. This has been reflected in the flowering of use cases + revenue for the main labs and app companies built on top.

At the same time, the labs are increasingly compute limited as one extrapolates out both planned training scale and future inference needs. Compute build outs seem to be limited, at least in part, by memory from Hynix, Samsung, Micron et al for at least the next 2 years while these companies go through a manufacturing build out cycle.

This means that rather than a single lab buying well ahead, or being able to use all the compute it wants, all the big labs are effectively and increasingly in a compute constrained world. This constraint may end up creating an artificial short-term asymptote on AI model progress. While people will undoubtedly get more efficiency out of the compute they have, this artificial compute constraint may mean no one lab is able to break significantly ahead until 2028 at the soonest - reinforcing an oligopoly market for LLMs. We may also see the labs “accordion” between allocating compute and human resources to apps vs models and back again. Similarly, the depreciation cycles on chips and systems will be different than everyone expected, and the lifetime of silicon will be extended due to lack of sufficient new supply.

The counter to this is that algorithmic or other breakthroughs, if contained within a single lab (vs leaking at an SF holiday party attended by researchers), could turbocharge a single company to dominance, particularly if coding takes off and there is some form of ongoing self improvement loop, with AI building future AI, leading into liftoff. If we do end up with a hard compute constrained environment, breakaway liftoff may wait for 2028. Of course, it is also possible we are compute constrained for years post 2028 due to excess demand. Exciting to watch what happens.

Compute (tokens) is the new currency

Compute (or, stated another way, tokens) is a new unit of denomination for economic value in Silicon Valley. Token budget impacts things like:

a. What you can accomplish as an engineer

b. Your spend and potential revenue as a company

c. Your business model

Some companies are effectively inference providers disguised as tools. Neoclouds are the clearest form of this, but things like Cursor are similarly providing cheap inference as a core part of their product offerings and effectively subsidizing compute, which has been a smart user acquisition and usage model. Who doesn't love extra tokens?

Things have gotten to the point where Allbirds (shoe company) just raised a convert to build a GPU farm. Will they be to AI what MicroStrategy is to crypto?

Hidden layoffs & the developing world

Most of the “layoffs due to AI” announced so far are probably just companies that overhired during the COVID zero interest rate environment slimming back down. Saying “look how good we are at AI, we need fewer people” sounds much better than “we way overhired and are fixing it a few years too late”.

That said, AI is having a real impact in multiple areas such as customer support. Companies that are shrinking teams due to AI are actually cutting outsourcing firms first - so the headcount is not directly on their own books but paid for as a service. This means countries like India and the Philippines may be impacted soonest and most in terms of employment, as they house many of these outsourced services organizations.

It also means some developing countries may lose their services ladder to upgrading their economy and work. If AI takes many of the outsourced services jobs first, employment in these economies will need to shift elsewhere. An interesting question is whether this shifts human migration patterns?

Employee headcount is going to flatten for lots of companies and then shrink

Multiple later stage CEOs told me that rather than do big layoffs due to AI, they will just stop growing headcount. So if revenue at the company is growing 30%, 50%, or 100%, headcount may be flat or slightly down as they allow attrition to shrink staff. Existing headcount will become more productive, and companies may start swapping in fewer, better people. In the medium term this may inflate the salaries of the very best people who can leverage AI immensely. Expect hiring to continue in sales and in some engineering for growing companies, but maybe not as much elsewhere.

Some companies are starting to ask what is the right ratio of token budget vs salaries in their org? Unclear what the right timeline for this metric is.

True startups (e.g. a 5 person team) in the short run should continue to scale up headcount like in the olden days as they hit product/market fit but just with more leverage per person. So the “flat company” is going to be more of a later stage or public company phenomenon for growing companies in the next 2-4 years. Low growth companies of course should shrink.

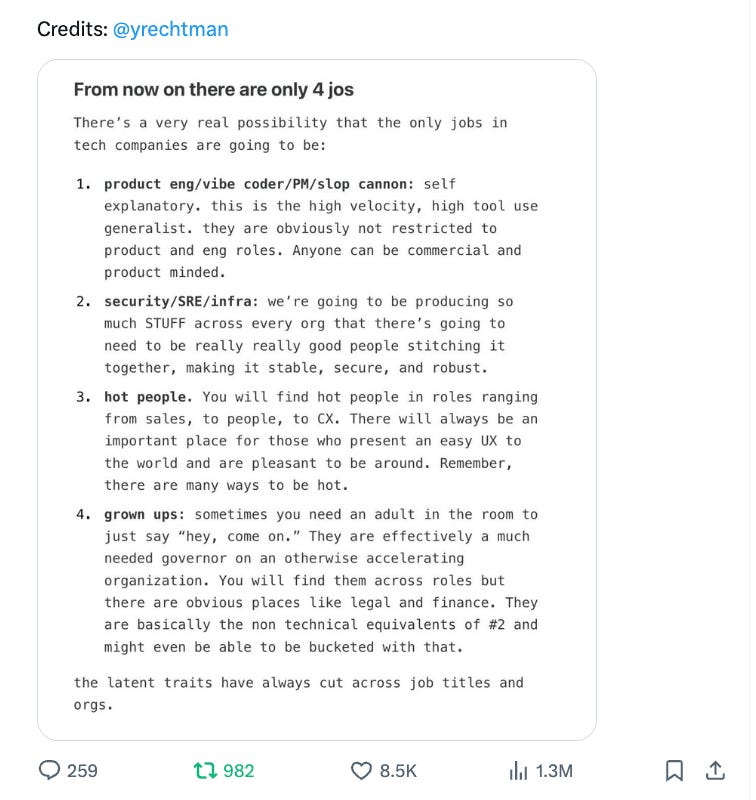

This may have implications for HR/software companies.

The Slop Age could be the golden era of AI x humanity

We are likely in the golden era of AI + humanity. Before the last few years, AI was inaccessible, not very generalizable, and could only do specific tasks. In the future, AI may become superhuman at most tasks and take over a lot of work some people find fun. Today, AI creates useful slop at volume, which means humans are still needed to desloppify the slop, but the slop provides real leverage on time and jobs, which means it is fun to be working right now. If AI displaces people eventually or does more interesting work, this golden moment may fade or change. Is the Slop Age the golden era of humanity + AI?

(One could of course argue that we were in the midst of a human slop era before the AI slop era - in other words the era of huge amounts of human created sloppy content on the internet as it grew to billions of web pages, but not billions of new human insights. Does the slop era end with AGI, or when AGI cleans up all the prior waves of human slop?)

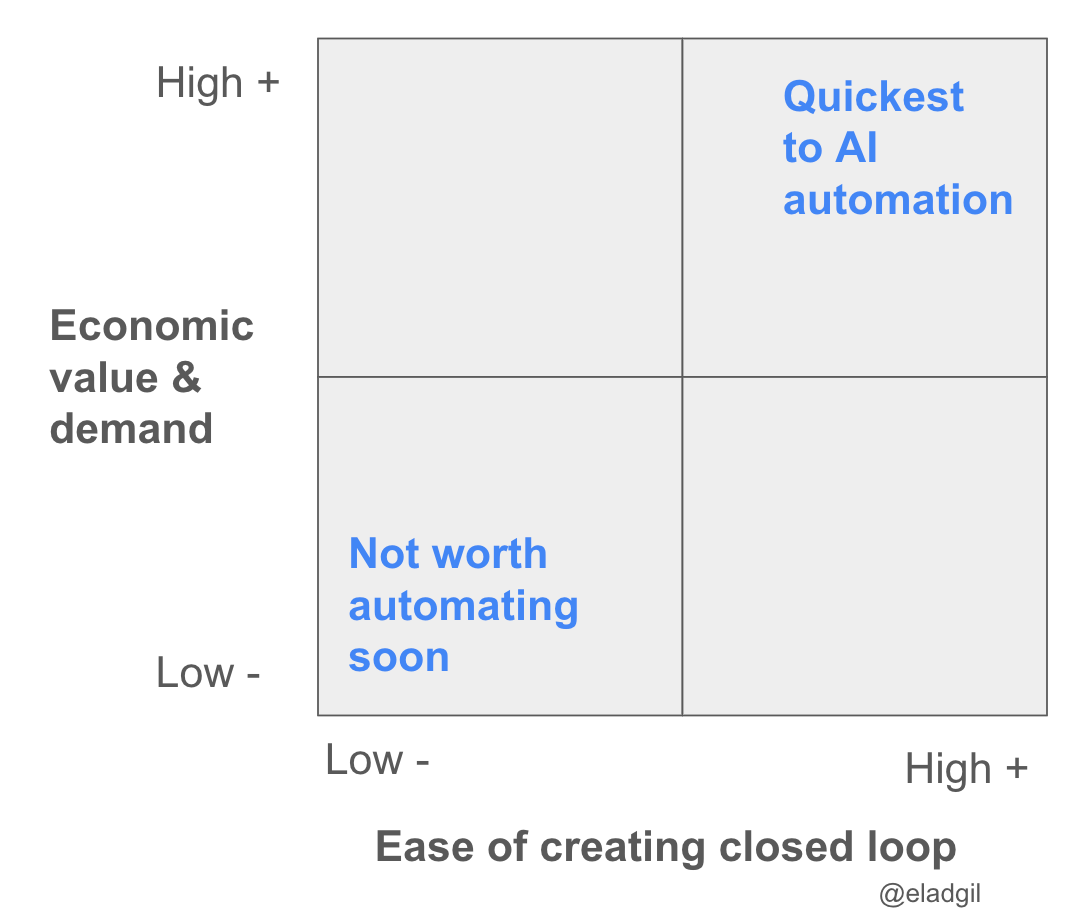

AI will eat closed loops first

AI will first automate away the things that are easier to form a closed loop learning system on. This is why code and AI research may be accelerated and then displaced quickly - you can have testable closed loop systems so machines can learn and iterate quickly. The tighter the closed loop, the faster the AI can learn. You can make a 2x2 of jobs by how closed loop they can be made, versus their economic value, and see where AI may impact labor fastest. Fast time to closed loop + high economic value = fastest AI impact (hence software engineering).
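If you want to play with the 2x2 yourself, here is a rough sketch. The 0-to-1 scores, the 0.5 threshold, and the labels for the three other quadrants are all hypothetical placeholders of mine, not measured data; only the "tight loop + high value = fastest impact" corner comes from the argument above.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    loop_tightness: float   # 0..1, hypothetical score: how testable/closed loop the work is
    economic_value: float   # 0..1, hypothetical score: relative economic value


def quadrant(job, threshold=0.5):
    """Place a job in the 2x2 of closed-loop-ness vs economic value."""
    tight = job.loop_tightness >= threshold
    valuable = job.economic_value >= threshold
    if tight and valuable:
        return "fastest AI impact"
    if tight:
        return "tight loop, lower value"
    if valuable:
        return "loose loop, high value"
    return "loose loop, lower value"


# Placeholder scores purely for illustration.
example = Job("software engineering", loop_tightness=0.9, economic_value=0.9)
print(example.name, "->", quadrant(example))
```

The interesting exercise is arguing about where a given job's scores should sit, and which loose-loop jobs could be pushed toward the tight-loop side.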

Code is interesting in that there is probably 10-100X the demand for great software developers as there is supply today (hence coding tools doing so well in market). The AI engineer of the future will be managing and orchestrating large numbers of agents to build things (systems and product thinking) vs writing a lot of code themselves (the auto-complete tab era).

An interesting question is what jobs or tasks will be made more closed loop next? Where is AI most embeddable and teachable?

Relatedly, data collection & labelling in every field will continue to grow.

Artisanal engineers vs utility engineers and AI

Deep artisanal “my code is my craft” and “I love creating bespoke things” engineers are decreasingly happy in a world of AI. Engineers who are systems thinkers and product thinkers are happiest. Many people are a mix of both.

The Harness

If you look at the use of AI coding tools, the harness (and broader product surface area, e.g. UX, workflow, etc) seems to be increasingly sticky in the short term. It is not just the model you use, but the environment, prompting, etc you build around it that shapes your choice. Brand also matters more than many people think. At some point, either one coding model breaks very far ahead, or they stay neck and neck. How important is the harness/workflow long term for defensibility for coding or enterprise applications?

Products tend to not be sticky until they suddenly are very sticky.

There will be variability in where future forms of harnesses matter vs not. What is the sales AI harness? The AI architect harness? This leaves room for some startups to thrive.

Selling work, not software. Units of labor as the product

AI is about selling units of labor online (and eventually in the atomic world via robotics), not displacing software. While Zendesk was selling seats to customer support reps, Decagon and Sierra sell customer support agentic work output and labor.

AI grows tech TAMs dramatically.

Most AI companies should consider exiting in the next 12-18 months

In the Internet era of 1995-2001, roughly 2000 companies went public. Of these, only a dozen or two survived. Similarly in the AI era, most companies, including those that are ramping revenue today, will see the market, competition, and adoption turn on them.

Founders running successful AI companies should all take a cold hard look at exiting in the next 12-18 months, which may be a value maximizing moment for outcomes. A handful of companies should absolutely not exit (eg OpenAI, Anthropic) but many should if they can while everything is on the upswing.

This is all of course counterbalanced by enormous growing demand for AI services of all types. While the tide is rising, many companies will seem to be unstoppable and durable - whether they are or not in the long run remains to be seen.

Anti-AI regulation & violence will both increase

AI has had very little real world impact on things like job displacement so far. However, some AI pundits and some leaders have been quite vocal and doomer-esque, to the point where a strong anti-AI narrative is emerging in both politics (Maine just banned new data centers, although this also ties into energy, jobs, and NIMBYism) and amongst violence-centric activists (see recent attack on Sam Altman). Expect this to increase dramatically. It would be great if more leaders in AI emphasized the optimistic side of what is coming in public rhetoric and political lobbying. In general, the AI field would benefit from its leaders continuing to work actively on reining in the doom and gloom.

Other

Any other random thoughts to consider? Ping me on X.

Thanks to Aravind Srinivas of Perplexity, Scott Wu of Cognition, Adam D’Angelo of Quora/Poe, and others for comments.
