AI Regulation

There have been multiple calls to regulate AI. It is too early to do so.

[While I was finalizing this post, Bill Gurley gave this great talk on incumbent capture and regulation].

ChatGPT has only been live for ~9 months and GPT-4 for 6 or so months. Yet there have already been strong calls to regulate AI due to misinformation, bias, existential risk, threat of biological or chemical attack, potential AI-fueled cyberattacks, etc., without any tangible example of any of these things actually having happened with any real frequency compared to existing versions without AI. Many, like chemical attacks, are truly theoretical, without an ordered logic chain of how they would happen or any explanation as to why existing safeguards or laws are insufficient.

Sometimes, regulation of an industry can be positive for consumers or businesses. For example, FDA regulation of food can protect people from disease outbreaks, chemical manipulation of food, or other issues.

In most cases, regulation can be very negative for an industry and its evolution. It may force an industry to be government-centric versus user-centric, prevent competition and lock in incumbents, move production or economic benefits overseas, or distort the economics and capabilities of an entire industry.

Given the many positive potentials of AI, and the many negatives of regulation, calls for AI regulation are likely premature, and in some cases clearly self-serving for the parties asking for it (it is not surprising the main incumbents say regulation is good for AI, as it will lock in their incumbency). Some notable counterexamples also exist where we should likely regulate things related to AI, but these are few and far between (e.g. export of advanced chip technology to foreign adversaries is a notable one).

In general, we should not push to regulate most aspects of AI now, and should instead let the technology advance and mature further for positive uses before revisiting this area.

First, what is at stake? Global health & educational equity + other areas

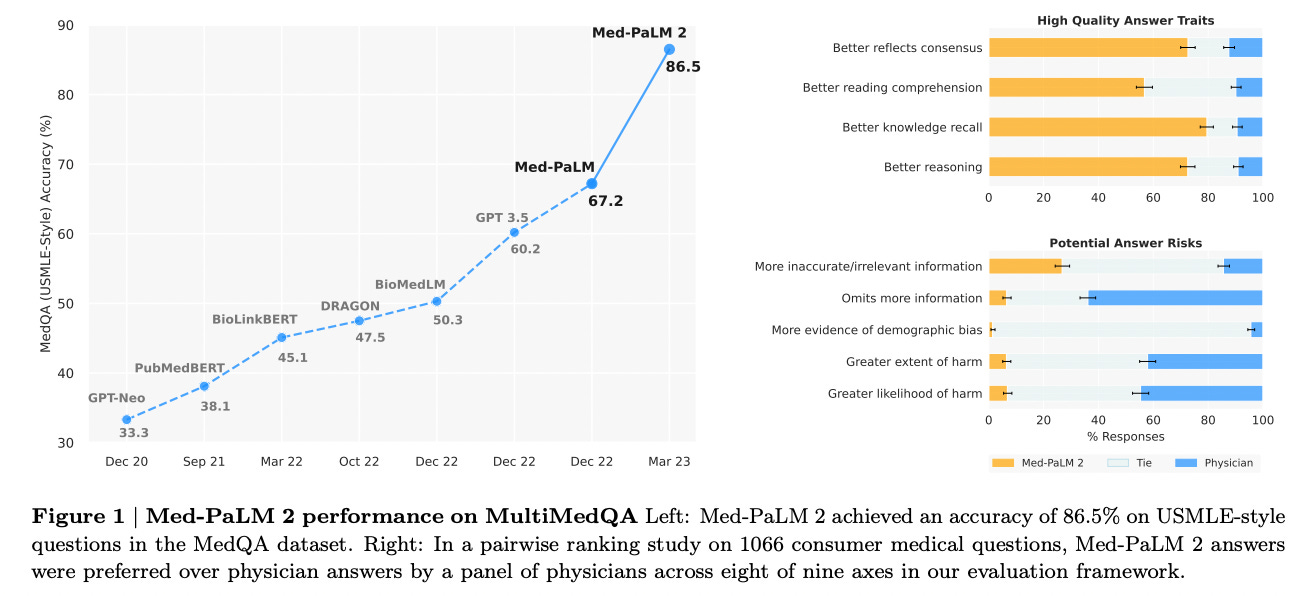

Too little of the dialogue today focuses on the positive potential of AI (I cover the risks of AI in another post). AI is an incredibly powerful tool to impact global equity for some of the biggest issues facing humanity. On the healthcare front, models such as Med-PaLM2 from Google now outperform medical experts to the point where training the model using physician experts may make the model worse.

Imagine having a medical expert available via any phone or device anywhere in the world - to which you can upload images and symptoms, follow up, and get ongoing diagnosis and care. This technology is available today and just needs to be bundled and delivered in a thoughtful way.

Similarly, AI can provide significant educational resources globally today. Even something as simple as auto-translating and dubbing all the educational text, video or voice content in the world is a straightforward task given today's language and voice models. Adding a chat-like interface that can personalize and pace the learning of the student on the other end is coming shortly based on existing technologies. Significantly increasing global equity of education is a goal we can achieve if we allow ourselves to do so.

Additionally, AI can play a role in economic productivity, national defense (covered well here), and many other areas.

AI is likely the single strongest motive force towards global equity in health and education in decades. Regulation is likely to slow down and confound progress towards these and other goals and use cases.

Regulation tends to prevent competition - it favors incumbents and kills startups

In most industries, regulation prevents competition. This famous chart of prices over time reflects how highly regulated industries (healthcare, education, energy) have their costs driven up over time, while less regulated industries (clothing, software, toys) drop costs dramatically over time. (Please note I do not believe these are inflation adjusted - so a 60-70% increase may be “break even” pricing once adjusted for inflation).

Regulation favors incumbents in two ways. First, it increases the cost of entering a market, in some cases dramatically. The high cost of clinical trials and the extra hurdles put in place to launch a drug are good examples of this. A must-watch video is this one with Paul Janssen, one of the giants of pharma, in which he states that the vast majority of drug development budgets are wasted on tests imposed by regulators which “has little to do with actual research or actual development”. This is a partial explanation for why (outside of Moderna, an accident of COVID), no new biopharma company with a $40B+ market cap has been launched in almost 40 years (despite healthcare being 20% of US GDP).

Secondly, regulation favors incumbents via something known as “regulatory capture”. In regulatory capture, the regulators become beholden to a specific industry lobby or group - for example by receiving jobs in the industry after working as a regulator, or via specific forms of lobbying. Regulators then have a strong incentive to “play nice” with the incumbents and to bias regulations their way, in order to get favors later in life.

Regulation often blocks industry progress: Nuclear as an example.

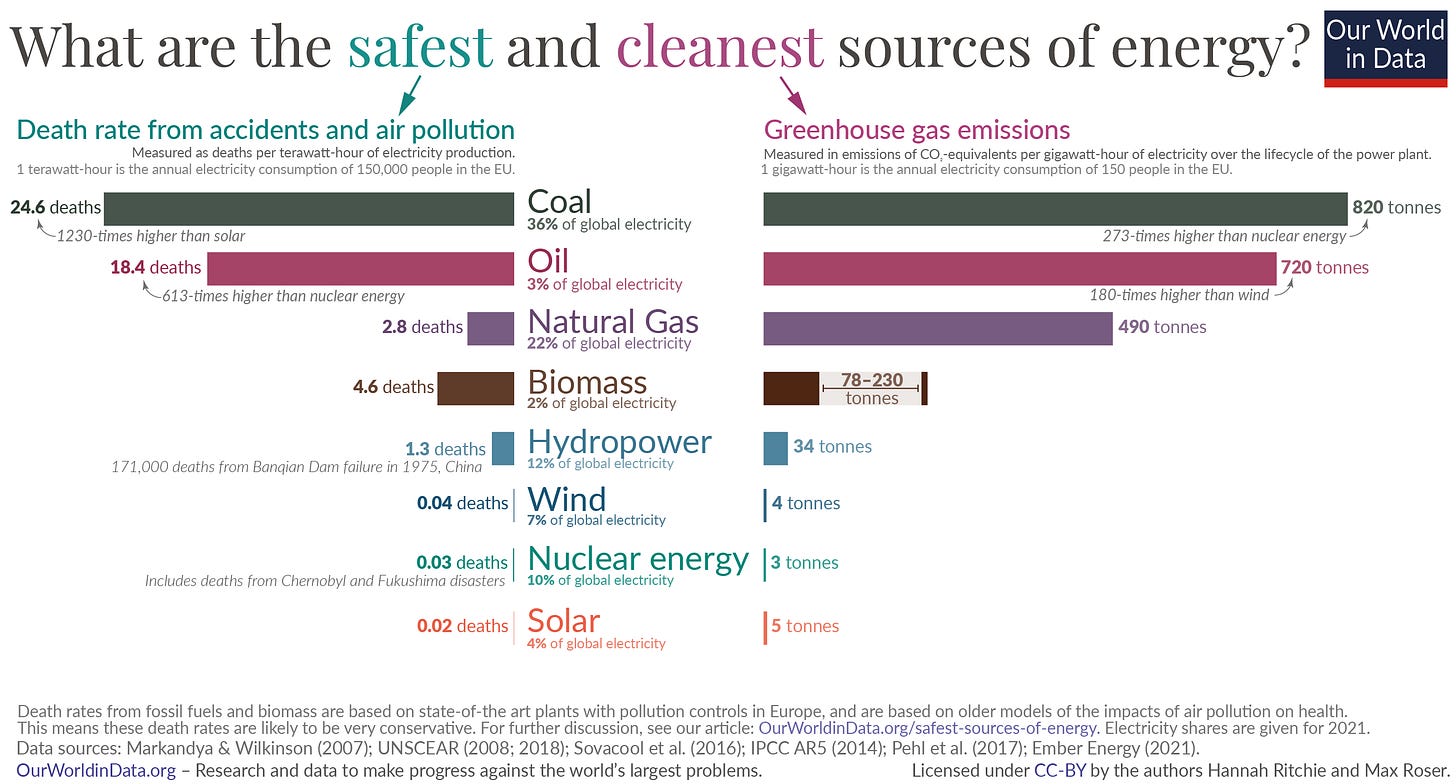

Many of the calls to regulate AI suggest some analog to nuclear, for example a registry of anyone building models and then a new body to oversee them. Nuclear is a good example of how in some cases regulators will block the entire industry they are supposed to watch over. For example, the Nuclear Regulatory Commission (NRC), established in 1975, has not approved a new nuclear reactor design for decades (indeed, not since the 1970s). This has prevented use of nuclear in the USA, despite actual data showing high safety profiles. France meanwhile has continued to have 70% of its power generated via nuclear, Japan is heading back to 30% with plans to grow to 50%, and the US has declined to 18%.

This is despite nuclear being both extremely safe (if one looks at data) and clean from a carbon perspective.

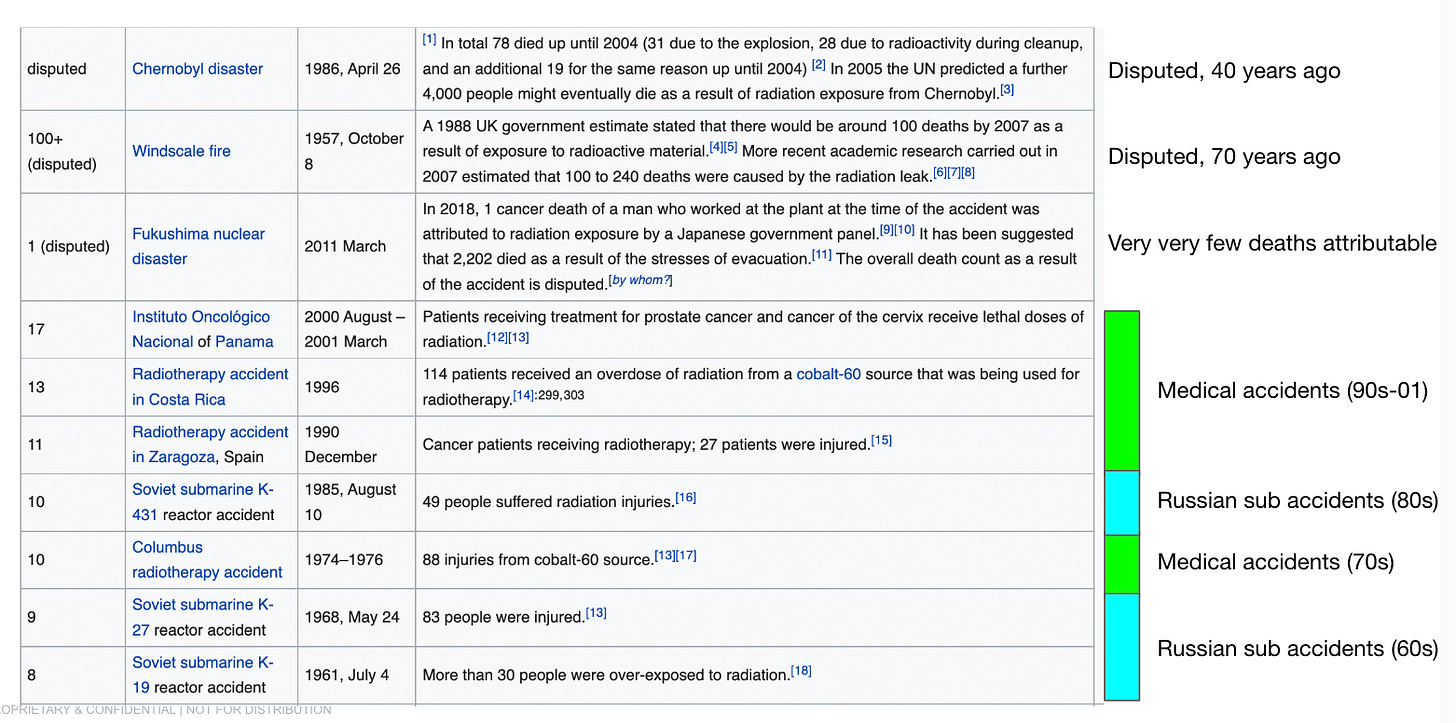

Indeed, most deaths from nuclear in the modern era have been from medical accidents or Russian sub accidents. This is something the actual regulator of nuclear power in the USA seems oddly unaware of.

Nuclear (and therefore Western energy policy) is ultimately a victim of bad PR, a strong eco-big oil lobby against it, and of regulatory constraints.

Regulation can drive an industry overseas

I am a short term AI optimist, and a long term AI doomer. In other words, I think the short term benefits of AI are immense, and most arguments made on tech-level risks of AI are overstated. For anyone who has read history, humans are perfectly capable of creating their own disasters. However, I do think in the long run (i.e. decades) AI is an existential risk for people. That said, at this point regulating AI in the USA will only send it overseas and fragment the cutting edge of it across jurisdictions outside US control, just as crypto is increasingly offshoring and even regulatory-compliant companies like Coinbase are considering leaving the US due to government crackdowns on crypto.

The genie is out of the bottle and this technology is clearly incredibly powerful and important. Over-regulation in the USA has the potential to drive it elsewhere. This would be bad not only for US interests, but also potentially for the moral and ethical frameworks that shape how the most cutting edge versions of AI get adopted. The European Union may show us an early form of this.

Regulation can distort economics, drive cost way up, and slow down progress for important societal areas: Healthcare as an example.

Regulation tends to distort the economics of an industry. Healthcare is a strong example where the person who benefits (the patient) is different from the person who decides what to get (the doctor), what can be paid for (the insurer) and what the market can even have to begin with (the regulator). This has caused a lack of competition for many parts of the healthcare industry, an inability to launch valuable products quickly, and in some cases, prevention of adoption of valuable products (due to lack of a payor).

It is telling that during COVID, when regulations were decreased, we had a flurry of vaccines developed in less than a year and multiple drugs tested and launched in anywhere from a few months to two years. All this was done with minimal patient side effects or bad outcomes. This similarly happened during WW2 when Churchill wanted to find a treatment for soldiers in the field with gonorrhea and made it a national mandate to find a cure. Penicillin was rediscovered and launched to market in less than 9 months.

Who do you want to make decisions on the future? Tech people are naive about “regulation”

Most people in tech have never had to deal with regulators. If you worked at Facebook in the early days or on a SaaS startup, the risk of regulation has likely not come up. Those who have dealt with regulators (such as later Meta employees or people who have run healthcare companies) realize that there tend to be many drawbacks to working in a regulated industry. Obviously, there are some positives from regulators when said regulators are functioning well and focused on their mission - e.g. data-driven prevention of consumer harm, in a way that does not overstep the legal frameworks of the country.

In many discussions I have had with tech people who call for regulation of AI, a few things have come out:

Many people working in AI think deeply and genuinely about what they are working on, and want it to be very positive for the world. Indeed, the AI industry and its early emphasis on safety and alignment strikes me as the most forward looking group I have seen in tech on the implications of their own technology. However….

…Most people calling for regulation have never worked in a regulated industry nor dealt with regulators. Many of their viewpoints on what “regulation” means are quite naive.

For example, a few people have told me they think regulation means “the government will ask a group of the smartest people working on AI to come together to set policy”. This seems to misunderstand a few basics of regulation - for example the regulator may actually not understand much about the topic they are regulating, or be driven politically versus factually. Indeed, recent examples of “AI experts” consulting on regulation tend to have standard political agendas, versus being giants in the AI technology world.

Most people do not understand that most “regulators” have varying internal viewpoints and the group you interact with within a regulator may lead to a completely different outcome. For example, depending on which specific subgroup you engage with at the FDA, SEC, FTC, or other group, you may end up with a very different result for your company. Regulators are often staffed by people with their own motivations, political viewpoints, and career aspirations. This impacts how they work with companies and the industries they regulate. Many regulators are hired later in their careers into the larger companies they regulated - which is part of what causes regulatory capture over time.

There seems to be a lack of appreciation for existing legal frameworks. There are laws and precedents that have been built up over time to cover many aspects of harm that may be caused in the short run by AI (hate speech, misinformation, and other “trust and safety issues” on the one hand, or use of AI to cause cyber attacks or physical harm on the other). These existing legal frameworks seem ignored by many of the people calling for regulation - many of whom do not seem to have any real knowledge of what laws already exist.

Many people misunderstand that the political establishment would like nothing more than to seize more power over tech. The game in tech is often around impact and financial outcomes, while the game in DC is about power. Many who seek power would love to have a way to take over and control tech. Calling for AI regulation is creating an opening to seize broader power. As we saw with COVID policies, the “slippery slope” is real.

It is worth pausing to ask yourself who you want setting norms for the AI industry - the CEOs and researchers behind the main AI companies (AKA self-regulation) or unelected government officials (see e.g. current actions against crypto, and tech M&A)? Which will lead to a worse outcome for AI and society?

Good questions to ask yourself about regulation, given recent times, may include: Do you think the current regulators have dealt well recently with inflation, interest rates, COVID policy, drug regulation and speed of developing life saving drugs, local policies on crime and drug use, or other issues? What primary data did you use to decide if these outcomes were good or bad or avoidable or not? This may be a litmus test for many other aspects of one's viewpoints.

Short term policy & what should be regulated?

There are some areas that seem reasonable to regulate for AI in the short run - but these should be highly targeted to pre-existing policies. For example:

Export controls. There are some things that make sense to regulate for AI now - for example the export of advanced semiconductor manufacturing technology has been, and should continue to be, subject to export controls.

Incident reporting. Open Phil has a good position, excerpted here: “similar to incident reporting requirements in other industries (e.g. aviation) or to data breach reporting requirements, and similar to some vulnerability disclosure regimes. Many incidents wouldn’t need to be reported publicly, but could be kept confidential within a regulatory body. The goal of this is to allow regulators and perhaps others to track certain kinds of harms and close-calls from AI systems, to keep track of where the dangers are and rapidly evolve mitigation mechanisms.”

Other areas. Other areas may make sense to regulate over time. Many of the areas people express strong concern for (misinformation, bias, etc.) have long standing legal and regulatory structures in place already.

It should be noted that I do think the short term risks of AI are overstated, while the long term risks may be understated. I think we should regulate AI at the moment in time where we think it represents actual existential risk, versus “more of the same” for what humanity has done in the past with or without technologies.

The first “AI election”

The 2024 presidential election may end up being our first “AI election” - in that many new generative AI technologies will likely be used at mass scale for the first time in an election. Examples of use may include things like:

Text to speech for large scale, personalized robo-dialing in the natural sounding voice of the candidates

Large scale content generation and farming for social media and other targeting

Deep fakes of video or other content

Whether AI actually ends up impacting the election or not, it may still be blamed for whatever outcome happens, similar to social networks being blamed for the 2016 outcome. The election may be used by groups as an excuse to regulate AI.

To sum it all up…

Regulation tends to squeeze much of the innovation and optimism out of an industry. It is no mistake that many companies stop innovating when the baleful eye of a regulator settles upon them. Examples from healthcare and biopharma, nuclear, and crypto all suggest regulation can stop or slow innovation significantly, cause offshoring of industries, and derail the positive purpose of an industry. Given the huge potential of AI for healthcare, education, and other basic areas of global equity, it is better to hold off on regulating AI for now.
